IBM Unveils Breakthrough Analog AI Chip Designed for Energy-Efficient Deep Learning

IBM Research demonstrates a novel analog AI processor that performs neural network computations directly in memory, achieving dramatic energy savings compared to conventional digital GPU architectures.

Key Takeaways

IBM Research has unveiled an analog AI chip that performs neural network computations directly in memory, eliminating the data-transfer bottleneck of traditional digital processors. The approach could dramatically reduce AI energy consumption compared to GPU-based systems.

IBM Research has revealed a breakthrough analog AI chip that performs neural network computations directly within memory arrays — eliminating the energy-intensive data movement between separate processor and memory units that dominates power consumption in conventional digital AI chips. The technology represents a fundamentally different approach to AI hardware that could dramatically improve the energy efficiency of deep learning inference workloads.

Compute-in-Memory: A Paradigm Shift

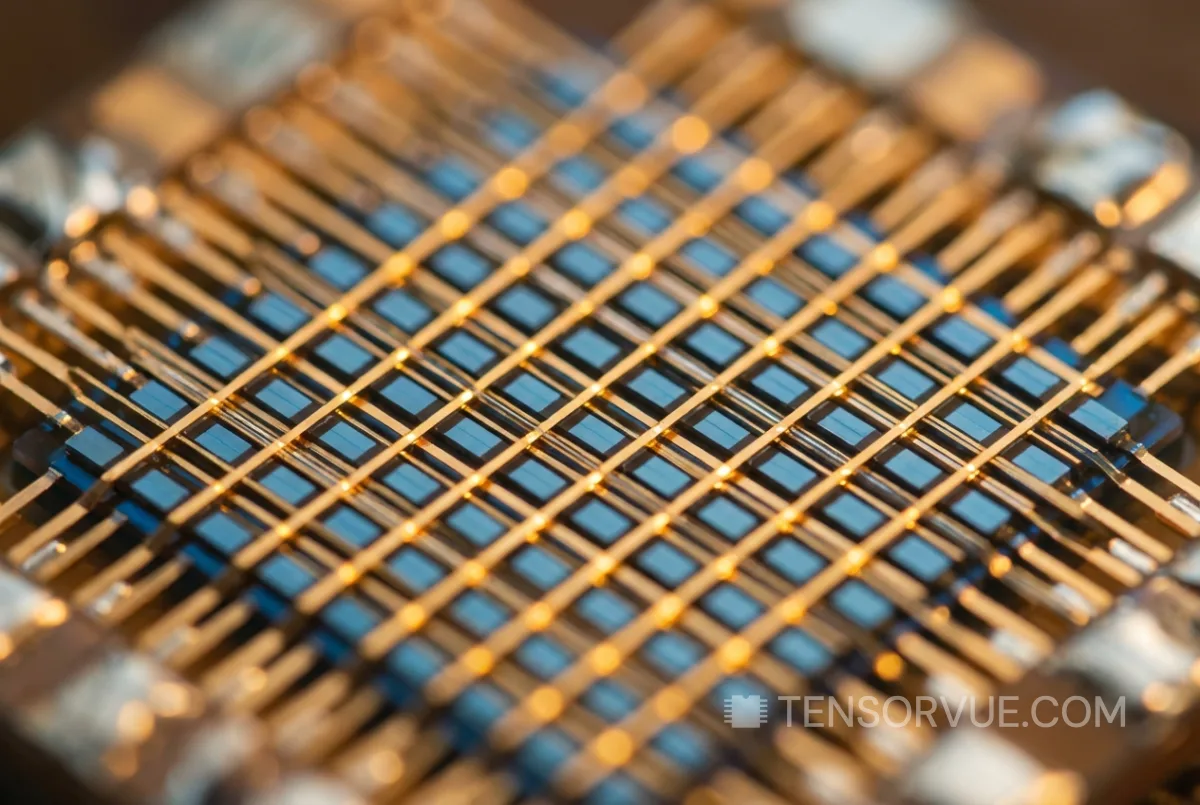

Traditional digital AI processors, including Nvidia GPUs and Google TPUs, operate by shuttling data between compute cores and memory chips. The bottleneck created by this data movement, known as the 'memory wall,' consumes the majority of energy in AI workloads and limits performance. IBM's analog approach sidesteps this limitation by encoding neural network weights directly into the resistance states of non-volatile memory (NVM) devices arranged in crossbar arrays.

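When input signals are applied to the crossbar array as voltages, the mathematical operations required for neural network inference, primarily matrix multiplications, occur naturally through the physics of electrical currents flowing through resistive elements. Each device passes a current proportional to its conductance times the applied voltage (Ohm's law), and the currents that meet on a shared wire add together (Kirchhoff's current law), so each output wire carries a weighted sum of the inputs. This means the computation happens where the data is stored, at the speed of electrical signal propagation, with minimal energy overhead.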
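The crossbar operation described above can be sketched in a few lines of Python. This is an idealized illustration, not IBM's design: the function names, the `g_max` parameter, and the choice to store each signed weight as a pair of non-negative conductances (one "positive" and one "negative" device whose currents are subtracted) are assumptions made for this example. Real devices also add noise, wire resistance, and converter limits, none of which is modeled here.

```python
def weights_to_conductances(weights, g_max=1.0):
    """Map signed weights to two non-negative conductance matrices.

    Hypothetical mapping for illustration: a conductance cannot be negative,
    so each weight w is split into (g_pos, g_neg) with w ~ (g_pos - g_neg) / scale.
    """
    w_max = max(abs(w) for row in weights for w in row) or 1.0
    scale = g_max / w_max  # largest |weight| maps to the largest conductance
    g_pos = [[max(w, 0.0) * scale for w in row] for row in weights]
    g_neg = [[max(-w, 0.0) * scale for w in row] for row in weights]
    return g_pos, g_neg, scale


def crossbar_matvec(g_pos, g_neg, scale, voltages):
    """Ideal crossbar readout: one output current per weight row."""
    outputs = []
    for gp_row, gn_row in zip(g_pos, g_neg):
        # Ohm's law per device (I = G * V); Kirchhoff's current law sums
        # the device currents that share an output wire.
        i_pos = sum(g * v for g, v in zip(gp_row, voltages))
        i_neg = sum(g * v for g, v in zip(gn_row, voltages))
        # Subtract the paired currents and undo the weight scaling.
        outputs.append((i_pos - i_neg) / scale)
    return outputs


weights = [[1.0, -2.0], [0.5, 0.25]]  # a tiny 2x2 layer
inputs = [3.0, 1.0]                   # input activations, applied as voltages
g_pos, g_neg, scale = weights_to_conductances(weights)
result = crossbar_matvec(g_pos, g_neg, scale, inputs)
print(result)
```

In this ideal model the result matches the ordinary matrix-vector product of `weights` and `inputs`. The point of the sketch is that no weight is ever fetched or moved: the multiply-accumulate falls out of applying voltages and reading currents.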

Why It Matters for the AI Industry

The energy consumption of AI data centers has become a critical concern for the industry. Data centers are projected to consume 3-4% of global electricity by 2030, with AI workloads representing the fastest-growing segment. Any technology that can deliver equivalent AI performance at significantly lower power consumption has enormous economic and environmental value.

IBM's analog approach is particularly promising for AI inference — the process of running trained models in production, which accounts for the vast majority of commercial AI compute. While analog computing introduces small accuracy variations compared to digital precision, IBM has demonstrated techniques to compensate for these variations while maintaining model accuracy within acceptable bounds.
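To give a feel for what "small accuracy variations" means, the sketch below perturbs each stored weight with random noise and measures how far the output drifts from the exact digital result. The multiplicative Gaussian noise model, the function names, and the noise levels are assumptions chosen for illustration; they are not IBM's device characterization, and the sketch does not implement IBM's compensation techniques.

```python
import random


def noisy_matvec(weights, inputs, rel_noise, rng):
    """Matrix-vector product with each weight perturbed by relative Gaussian noise."""
    out = []
    for row in weights:
        acc = 0.0
        for w, x in zip(row, inputs):
            # Assumed noise model: the stored value deviates by a random
            # fraction of itself on every read.
            acc += w * (1.0 + rng.gauss(0.0, rel_noise)) * x
        out.append(acc)
    return out


def mean_abs_error(weights, inputs, rel_noise, trials=200, seed=0):
    """Average absolute output error versus the exact product, over many trials."""
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    exact = [sum(w * x for w, x in zip(row, inputs)) for row in weights]
    total = 0.0
    for _ in range(trials):
        approx = noisy_matvec(weights, inputs, rel_noise, rng)
        total += sum(abs(a - e) for a, e in zip(approx, exact)) / len(exact)
    return total / trials


weights = [[1.0, -2.0], [0.5, 0.25]]
inputs = [3.0, 1.0]
for level in (0.0, 0.05, 0.2):
    print(level, mean_abs_error(weights, inputs, level))
```

Under this toy model the output error grows with the noise level and vanishes when the noise is zero, which is why analog inference depends on keeping device variation small or correcting for it.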

Comparison with Current AI Hardware

| Architecture | Approach | Strength | Limitation |
|---|---|---|---|
| Nvidia GPU (Digital) | Massively parallel digital compute | Training + inference versatility | High power, memory wall bottleneck |
| Google TPU (Digital) | Domain-specific digital accelerator | Optimized for tensor operations | Less flexible than GPUs |
| IBM Analog AI | Compute-in-memory via NVM crossbars | Dramatic energy efficiency | Currently best suited for inference only |
| Neuromorphic (e.g., Intel Loihi) | Brain-inspired spiking neural networks | Ultra-low power for sparse workloads | Limited model compatibility |

IBM's analog chip remains in the research phase and is not yet commercially available. However, the demonstration provides a credible path toward AI hardware that could deliver inference performance at a fraction of the energy cost of current digital systems — a capability that could reshape the economics of AI deployment as the industry's power consumption comes under increasing scrutiny.